#Kubernetes Platform Series

- Part 1: Kubernetes Self-service

- Part 2: Kubernetes Multi-tenancy

- Part 3: Kubernetes Cost Optimization with Virtual Clusters

Multitenancy has long been a core capability of cloud computing, and indeed, for virtualization in general.

With multitenancy, everybody has their own sandbox to play in, isolated from everybody else’s sandbox, even though beneath the covers, they share common infrastructure.

Kubernetes offers its own kind of multitenancy as well, via the use of namespaces. Namespaces provide a mechanism for organizing clusters into virtual sub-clusters that serve as logically separated tenants.

Relying upon namespaces to provide all the advantages of true multitenancy, however, is a mistake. Namespaces suit cloud native teams whose members don’t want to step on each other’s toes, but who are all colleagues who trust each other.

True multitenancy, in contrast, isn’t for colleagues. It’s for strangers – where no one knows whether the owner of the next tenant over is up to no good. Kubernetes namespaces don’t provide this level of multitenancy.

#Multitenancy: A Quick Primer

Multitenancy is most familiar as a core part of how cloud computing operates. Everybody’s cloud account is its own separate world, complete in itself and separate from everybody else’s. You get your own login, configuration, services, and environments. Meanwhile, everybody else has the same experience, even though under the covers, the cloud provider runs each of these tenants on shared infrastructure.

There are different flavors of multitenancy, depending upon just what infrastructure they share beneath this abstraction.

IaaS tenants, aka instances or nodes, share hypervisors that abstract the underlying hardware and physical networking. Meanwhile, SaaS tenants, for example, Salesforce or ServiceNow accounts, might share database infrastructure, common services, or other application elements.

Either way, each tenant is isolated from all the others. Isolation, in fact, is one of the most important characteristics of multitenancy, because it protects one tenant from the actions of another.

To be effective, the infrastructure must enforce isolation at the network layer. Any network traffic from one tenant that is destined for another must leave the tenant via its public interfaces, traverse the network external to the tenants, and then enter the destination tenant through the same interface that any other external traffic would enter.

Even when the infrastructure provider decides to offer a shortcut for such traffic, avoiding the hairpin to improve performance, it’s important for such shortcuts to comply with the same network security policies as any other traffic.

#Using Kubernetes Namespaces for Multitenancy

In programming, namespaces have been around for years, largely as a way to keep different developers working on the same project from inadvertently declaring identical variable or method names.

Providing a scope for names is also a benefit of Kubernetes namespaces, but naming alone isn’t their entire purpose. Kubernetes namespaces are also virtual clusters within the same physical cluster.

This notion of virtual clusters sounds like individual tenants in a multitenant cluster, but in the case of Kubernetes namespaces, they have markedly different properties.

Kubernetes logically separates namespaces within a cluster but allows for them to communicate with each other within the cluster. By default, Kubernetes doesn’t offer any security for such interactions, although it does allow for role-based access control (RBAC) in order to limit users and processes to individual namespaces.

Such RBAC, however, does not provide the network isolation that is essential to true multitenancy. Nor does Kubernetes implement privilege separation itself; it delegates that control to a dedicated authorization plugin.
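
To make the namespace-scoped RBAC described above concrete, here is a minimal sketch in Python that builds a `Role` and `RoleBinding` as plain dictionaries and prints them as JSON, which Kubernetes accepts alongside YAML. The namespace `team-a`, the user `alice`, and the role name `pod-reader` are hypothetical placeholders, not names from any real cluster. Note what the sketch does not do: it limits which API objects a user may read, but it says nothing about pod-to-pod traffic between namespaces.

```python
import json


def namespaced_read_only(namespace: str, user: str) -> list[dict]:
    """Build a Role and RoleBinding that confine `user` to one namespace."""
    role_name = "pod-reader"  # hypothetical role name, chosen for illustration
    role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": role_name, "namespace": namespace},
        "rules": [
            {
                "apiGroups": [""],  # "" denotes the core API group
                "resources": ["pods"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-{user}", "namespace": namespace},
        "subjects": [
            {"kind": "User", "name": user, "apiGroup": "rbac.authorization.k8s.io"}
        ],
        "roleRef": {
            "kind": "Role",
            "name": role_name,
            "apiGroup": "rbac.authorization.k8s.io",
        },
    }
    return [role, binding]


if __name__ == "__main__":
    # Hypothetical namespace and user; print both manifests as JSON.
    for manifest in namespaced_read_only("team-a", "alice"):
        print(json.dumps(manifest, indent=2))
```

Because both objects carry `metadata.namespace`, the permission applies only inside that one namespace.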

Furthermore, Kubernetes defines cluster roles and their associated bindings, thus empowering certain individuals to have access and control over all the namespaces within the cluster. Not only do such roles open the door for insider attacks, but they also allow for misconfigurations of the cluster roles that would leave the door open between namespaces.
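
One way to see how far cluster roles reach is to list who holds them. The sketch below is an illustration under stated assumptions rather than a complete audit: it assumes the input is the `items` list from `kubectl get clusterrolebindings -o json`, and the group and user names in the sample data are hypothetical.

```python
def cluster_wide_subjects(bindings: list[dict]) -> list[tuple[str, str, str]]:
    """Return sorted (subject kind, subject name, role name) for cluster-wide bindings."""
    found = set()
    for binding in bindings:
        # Skip anything explicitly marked as a different kind (e.g. a RoleBinding).
        if binding.get("kind", "ClusterRoleBinding") != "ClusterRoleBinding":
            continue
        role = binding.get("roleRef", {}).get("name", "")
        # `subjects` may be missing or null on a binding with no subjects.
        for subject in binding.get("subjects") or []:
            found.add((subject.get("kind", ""), subject.get("name", ""), role))
    return sorted(found)


if __name__ == "__main__":
    # Hypothetical sample data shaped like ClusterRoleBinding objects.
    sample = [
        {
            "kind": "ClusterRoleBinding",
            "roleRef": {"kind": "ClusterRole", "name": "cluster-admin"},
            "subjects": [{"kind": "Group", "name": "platform-admins"}],
        },
        {
            "kind": "ClusterRoleBinding",
            "roleRef": {"kind": "ClusterRole", "name": "view"},
            "subjects": None,
        },
    ]
    for kind, name, role in cluster_wide_subjects(sample):
        print(f"{kind} {name} holds {role} in every namespace")
```

Every subject this returns has permissions that cut across all namespaces, which is exactly the exposure described above.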

If cluster roles weren’t bad enough, Kubernetes also allows for privileged pods within a cluster. Depending upon how admins have configured such pods, they can access node-level capabilities of the node hosting the pod. For example, a privileged pod might be able to access the node’s file system, network, or Linux process capabilities.

In other words, a privileged pod can impersonate the node that hosts it, regardless of which namespaces exist on the cluster or how they’re configured.
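
A short sketch can show what such node-level access looks like in a pod manifest. The function below inspects a pod given as a Python dictionary and reports the standard pod spec fields that reach past the namespace boundary: `hostNetwork`, `hostPID`, `hostIPC`, `securityContext.privileged`, and `hostPath` volumes. The function name and the sample pod are hypothetical, and the list of checks is illustrative, not exhaustive.

```python
def node_level_access(pod: dict) -> list[str]:
    """List the settings in a pod manifest that grant access to the host node."""
    spec = pod.get("spec", {})
    findings = []
    # Pod-level flags that share the node's network, process, or IPC namespaces.
    for flag in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(flag) is True:
            findings.append(flag)
    # Containers (including init containers) running in privileged mode.
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    for container in containers:
        security = container.get("securityContext") or {}
        if security.get("privileged") is True:
            findings.append(f"privileged container: {container.get('name', '?')}")
    # Volumes that mount part of the node's file system into the pod.
    for volume in spec.get("volumes", []):
        if "hostPath" in volume:
            findings.append(f"hostPath volume: {volume['hostPath'].get('path', '?')}")
    return findings


if __name__ == "__main__":
    # Hypothetical pod manifest used only to exercise the checks.
    pod = {
        "metadata": {"name": "debug", "namespace": "team-a"},
        "spec": {
            "hostNetwork": True,
            "containers": [
                {"name": "debug", "securityContext": {"privileged": True}}
            ],
            "volumes": [{"name": "root", "hostPath": {"path": "/"}}],
        },
    }
    for finding in node_level_access(pod):
        print(finding)
```

None of these fields depends on the pod’s namespace, which is why namespace boundaries alone do not contain a pod configured this way.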

#‘True’ Kubernetes Multitenancy that Provides Isolation between Tenants

To implement secure Kubernetes multitenancy, it’s essential to use a tool like the one Loft Labs provides, which implements virtual clusters within Kubernetes clusters that act just like real clusters.

With this ‘true’ multitenancy, traffic from one virtual cluster to another must go through the same access controls as any cluster-to-cluster traffic would – because fundamentally, Loft Labs handles traffic between virtual clusters just as Kubernetes would handle traffic between clusters.

One of the primary benefits of this approach to Kubernetes multitenancy is that virtual clusters support namespaces just as clusters do, not necessarily for isolation (as namespace isolation is inadequate), but for the name scoping for which namespaces are best known.

Loft Labs’ multitenancy provides other benefits that namespaces cannot, for example, the ability to spin down individual virtual clusters for better cost efficiency.

#The Intellyx Take

The best way to think about multitenancy options for Kubernetes is this: namespaces are for friends, while Loft Labs’ multitenancy is also for strangers.

Using namespaces for multitenancy works best when securing traffic between tenants is a non-issue, say, when all the developers using the cluster are on the same team and actively collaborating.

True multitenancy of the sort Loft Labs offers, in contrast, gives separate teams their own virtual clusters, even if those teams aren’t collaborating, or indeed, don’t know or trust each other at all.

This zero-trust approach to sharing resources is fundamental to modern cloud native computing, even in situations where people are all working for the same company.

Not only does such isolation add security, but it also enforces GitOps-style best practices for how multiple teams can work on the same codebase in parallel.

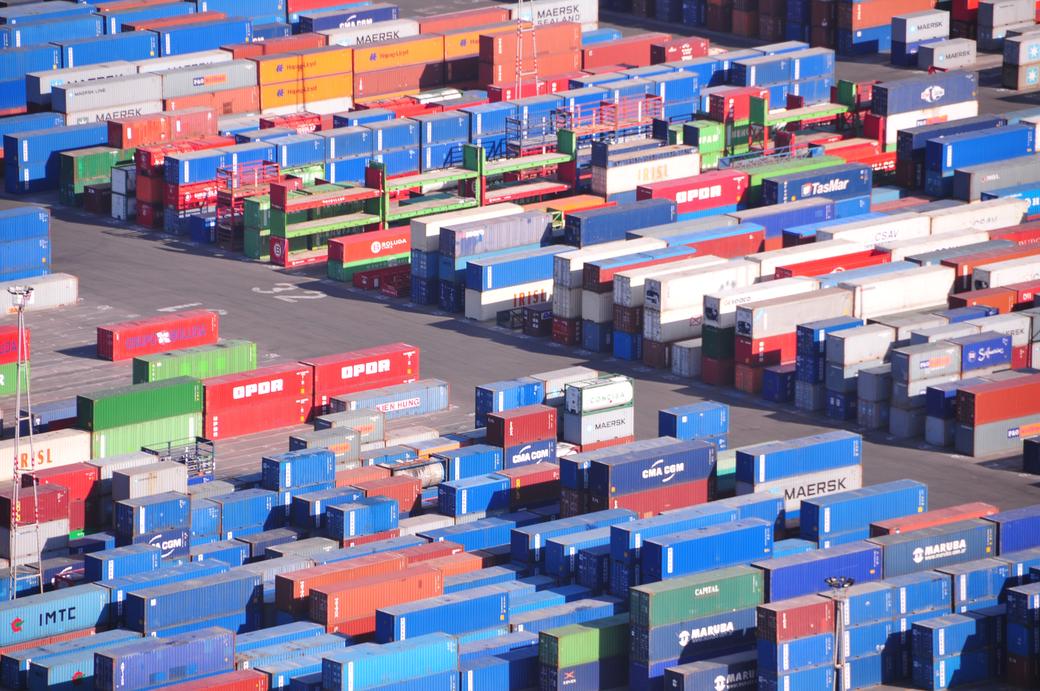

Copyright © Intellyx LLC. Loft Labs is an Intellyx customer. Intellyx retains final editorial control of this article. Photo by Mitul Shah from Burst